ECMWF hosted the last project meeting of the EarthServer-2 project at the beginning of March. The three-year EU-funded Horizon 2020 project explored the use of standardised web services for efficient access to large data volumes. Two standard data-access protocols defined by the Open Geospatial Consortium (OGC), designed to offer web-based access to multi-dimensional geospatial datasets, were explored: (i) Web Coverage Service (WCS) and (ii) Web Coverage Processing Service (WCPS), which is an extension of WCS. The project has enabled ECMWF to better understand the needs of the wider user community and will help to build new, targeted services in the future.

OGC web services

ECMWF already delivers large volumes of forecast data to its users in real time, and data from its MARS archive to the meteorological community. These services work well for meteorological users, but businesses and scientists from non-meteorological domains who want to integrate meteorological data into their services often struggle with domain-specific interfaces, data formats and data volumes. Web services offer easier and customised access to the data: data is accessed over the Internet via a URL and can therefore easily be integrated into web or desktop applications and scientific workflows. One of the main advantages of web services is the ability to retrieve time-series data. Users of climate data in particular are interested in retrieving climate information for specific locations. Retrieving a time series directly saves them from time-consuming downloads and from having to extract the information they need from large volumes of data.
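To make the time-series use case concrete, the sketch below builds a WCS 2.0.1 GetCoverage request in the key-value-pair (KVP) encoding. It slices the coverage at a single latitude and longitude and trims the time axis to a range, so the server returns only one value per time step. The endpoint URL, the coverage name `ERA_Interim_2t` and the axis labels `Lat`, `Long` and `ansi` are assumptions for illustration; a real service publishes its own names in its GetCapabilities and DescribeCoverage responses.

```python
from urllib.parse import urlencode

# Hypothetical endpoint and coverage name, used for illustration only.
ENDPOINT = "https://example.org/rasdaman/ows"
COVERAGE_ID = "ERA_Interim_2t"


def time_series_url(endpoint, coverage_id, lat, lon, start, end, fmt="application/json"):
    """Build a WCS 2.0.1 KVP GetCoverage URL for a point time series.

    Slicing Lat and Long at a single value removes the spatial dimensions;
    trimming the time axis keeps a range of time steps. The axis labels
    'Lat', 'Long' and 'ansi' are assumptions and must be checked against
    the coverage's DescribeCoverage response.
    """
    params = [
        ("service", "WCS"),
        ("version", "2.0.1"),
        ("request", "GetCoverage"),
        ("coverageId", coverage_id),
        ("subset", f"Lat({lat})"),
        ("subset", f"Long({lon})"),
        ("subset", f'ansi("{start}","{end}")'),
        ("format", fmt),
    ]
    # A list of tuples (rather than a dict) lets 'subset' appear more than once.
    return f"{endpoint}?{urlencode(params)}"


if __name__ == "__main__":
    print(time_series_url(ENDPOINT, COVERAGE_ID, 51.5, -1.0, "2000-01-01", "2000-12-31"))
```

The resulting URL can be pasted into a browser or fetched from any HTTP client, which is what makes this kind of access easy to embed in other applications.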

Web services using OGC standards bring several additional benefits. OGC standards provide data access in a standardised way that is already well established in the world of geographic information systems (GIS). This makes it easier to exchange or combine data from different data systems. Instead of learning how to access data from each system separately, users only have to learn the structure of an OGC web service request, and the same structure applies to any service that implements the same OGC standard. A further benefit of using standards is vendor neutrality: service providers can choose between different software solutions to provide a standard web service endpoint.
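The point about learning one request structure can be illustrated with a single helper that works unchanged against any WCS 2.0 endpoint; only the base URL differs between providers. The two provider URLs and the coverage identifier below are placeholders, not real services.

```python
from urllib.parse import urlencode

# Two hypothetical WCS 2.0 endpoints run by different providers, possibly on
# different server software. The URLs are placeholders.
ENDPOINTS = {
    "provider_a": "https://wcs.provider-a.example/ows",
    "provider_b": "https://maps.provider-b.example/wcs",
}


def wcs_request(endpoint, request, **extra):
    """Build a WCS 2.0.1 KVP request; only the endpoint differs between servers."""
    params = {"service": "WCS", "version": "2.0.1", "request": request, **extra}
    return f"{endpoint}?{urlencode(params)}"


for name, base in ENDPOINTS.items():
    # GetCapabilities lists the coverages a server offers ...
    print(name, wcs_request(base, "GetCapabilities"))
    # ... and DescribeCoverage returns axis labels, grid and range metadata.
    print(name, wcs_request(base, "DescribeCoverage", coverageId="example_coverage"))
```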

Data access on demand can easily be integrated into data processing workflows. Data no longer has to be downloaded but can be accessed directly from the server hosted by the data provider. Data processing or analysis workflows, for example in Jupyter notebooks, can then easily be shared and reproduced among team members and colleagues.

Challenges

The project revealed some challenges in offering web services operationally. Data provided by a web service should be described by relevant metadata, for example units of measure, and this metadata is ideally taken directly from the source data. Furthermore, a web service should offer suitable output formats; JSON-based formats are particularly useful for rapid web application development. The output returned for a request should also carry associated metadata, such as appropriate axis labels. Some OGC standards allow users to request extensive processing of the data on the server. Managing such requests is challenging on an operational system if the system cannot estimate the resource requirements of each request in advance. The project was a chance for ECMWF to explore this scenario.
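One simple way to approach the resource-estimation problem is to bound the size of a request before running it. The sketch below is a hypothetical admission check, not the project's method: it assumes a regular 0.75° latitude-longitude grid, 4-byte values and an operator-chosen limit of 500 MiB. It only estimates output volume; the cost of server-side processing operations would need a separate model.

```python
from dataclasses import dataclass

# Hypothetical limit an operator might set for synchronous requests.
MAX_BYTES = 500 * 1024**2  # 500 MiB


@dataclass
class Subset:
    lat_min: float
    lat_max: float
    lon_min: float
    lon_max: float
    n_times: int          # number of time steps requested
    n_params: int = 1     # number of variables requested


def estimate_bytes(s: Subset, grid_step_deg: float = 0.75, bytes_per_value: int = 4) -> int:
    """Estimate the uncompressed size of a subset of a regular lat-lon grid."""
    n_lat = int(round((s.lat_max - s.lat_min) / grid_step_deg)) + 1
    n_lon = int(round((s.lon_max - s.lon_min) / grid_step_deg)) + 1
    return n_lat * n_lon * s.n_times * s.n_params * bytes_per_value


def admit(s: Subset, limit: int = MAX_BYTES) -> bool:
    """Accept the request only if its estimated size stays within the limit."""
    return estimate_bytes(s) <= limit
```

Under these assumptions, a single-point series of 1,460 six-hourly steps (one year) is about 5.8 kB and is admitted, while a global field over 14,600 steps (ten years) comes to roughly 6.8 GB and would be rejected or redirected to an asynchronous route.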

Developing the MARS-rasdaman connection

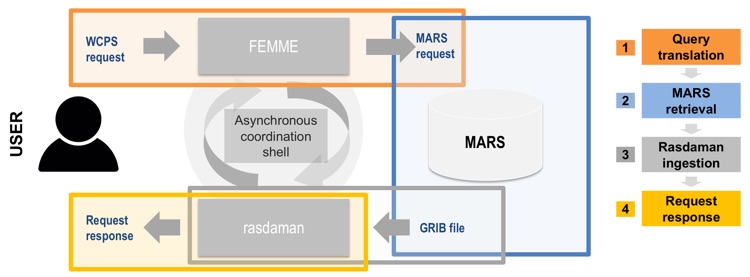

The ERA-Interim reanalysis was chosen as the first dataset to be made accessible on the fly through the MARS-rasdaman interface. Data is retrieved through a multi-step approach that completely hides the MARS request syntax from the user. The approach retrieves data from MARS efficiently by combining the rasdaman array database technology with FeMME, a metadata manager that holds the metadata the two systems need in order to communicate with each other. The steps can be summarised as follows:

- The user sends a WCS request to the public-facing interface of the service, and the request is translated into a corresponding MARS request.

- The MARS request is then dispatched internally and placed in the queue.

- The data retrieved from MARS is then registered in the rasdaman database (according to predefined, data-dependent configuration files that define how the data is mapped).

- Finally, a response is sent to the user as soon as the data is available for download. This way, users can query analysis-ready data without fetching potentially large GRIB files and without having to write explicit requests to the MARS system. At the same time, the approach benefits from the extensive data-processing functionality of WCPS, the processing extension of the WCS standard.
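The first step, translating a WCS request into a MARS request, can be sketched as a lookup of fixed archive keywords per coverage plus a conversion of the requested subset. In the service described here that mapping comes from metadata held in FeMME; the hard-coded table, the coverage name `ERA_Interim_2t` and the function `wcs_subset_to_mars` are hypothetical stand-ins. The keywords shown (`class=ei`, `param=167.128` for 2 m temperature, `area` given as North/West/South/East) follow MARS conventions for ERA-Interim surface analyses, but the exact request the service generated is not given in the text.

```python
# Hypothetical mapping from WCS coverage identifiers to fixed MARS keywords.
# In the service described above this role is played by metadata in FeMME.
COVERAGE_TO_MARS = {
    "ERA_Interim_2t": {
        "class": "ei",
        "stream": "oper",
        "expver": "1",
        "type": "an",
        "levtype": "sfc",
        "param": "167.128",   # 2 m temperature
        "grid": "0.75/0.75",
    },
}


def wcs_subset_to_mars(coverage_id, lat_range, lon_range, date_range,
                       times=("00:00", "06:00", "12:00", "18:00"),
                       target="output.grib"):
    """Translate a WCS-style spatio-temporal subset into a MARS retrieve request."""
    keywords = dict(COVERAGE_TO_MARS[coverage_id])  # copy the fixed keywords
    south, north = lat_range
    west, east = lon_range
    start, end = date_range
    # MARS expects the area as North/West/South/East.
    keywords["area"] = f"{north}/{west}/{south}/{east}"
    keywords["date"] = f"{start}/to/{end}"
    keywords["time"] = "/".join(times)
    keywords["target"] = f'"{target}"'
    body = ",\n    ".join(f"{k}={v}" for k, v in keywords.items())
    return f"retrieve,\n    {body}"


print(wcs_subset_to_mars("ERA_Interim_2t", (50, 60), (-10, 2), ("2000-01-01", "2000-12-31")))
```

Keeping the per-coverage keywords in a separate table, rather than in the translation code, mirrors the role the configuration files and FeMME play in the steps above: adding a dataset means adding metadata, not changing code.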

Ideally, data retrieved from the archive is cached in the rasdaman database for a predetermined amount of time. Subsequent requests for the same data can then quickly access the data already ingested in rasdaman, without a further (potentially time-consuming) retrieval from the archive. This mechanism is built on top of the existing MARS caching logic and is expected to further improve response times. More advanced caching policies can be developed once more statistics on service usage are available.
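A fixed time-to-live (TTL) cache is the simplest policy matching this description. The sketch below keeps entries in memory, keyed by a normalised MARS request so that the same keywords in a different order hit the same entry; the stored value stands in for a reference to a coverage already ingested in rasdaman. The class and function names are hypothetical, and this is not the project's implementation.

```python
import time


class TTLCache:
    """Keep retrieved datasets for a fixed time-to-live, keyed by request."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock          # injectable, so expiry can be tested
        self._store = {}            # key -> (expiry time, value)

    @staticmethod
    def key(mars_request: dict) -> tuple:
        # Sort keywords so that equivalent requests map to the same entry.
        return tuple(sorted((k, str(v)) for k, v in mars_request.items()))

    def get(self, mars_request):
        k = self.key(mars_request)
        entry = self._store.get(k)
        if entry is None:
            return None
        expires, value = entry
        if self.clock() >= expires:
            del self._store[k]      # stale: force a fresh archive retrieval
            return None
        return value

    def put(self, mars_request, value):
        self._store[self.key(mars_request)] = (self.clock() + self.ttl, value)


def get_or_retrieve(cache, mars_request, retrieve):
    """Serve from the cache if possible, otherwise call the (slow) archive."""
    value = cache.get(mars_request)
    if value is None:
        value = retrieve(mars_request)
        cache.put(mars_request, value)
    return value
```

A usage-based policy, as suggested above, could replace the fixed `ttl_seconds` with a lifetime that depends on how often a request has been seen.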

The advantage of this architecture is twofold. Firstly, the user can interact with the service through plain WCS requests without retrieving (and then post-processing) large amounts of meteorological and climate data. Secondly, there is no need to ingest large amounts of potentially unused data into the rasdaman database. This makes the approach scalable and suitable for larger databases.
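The processing extension makes the "no post-processing" advantage concrete: with WCPS the user can ask the server to subset and transform the data before anything is returned. The sketch below builds a WCPS query that extracts a point time series and converts it from kelvin to degrees Celsius on the server, then wraps it in a WCS `ProcessCoverages` KVP request. The endpoint, the coverage name and the axis labels are assumptions, and the accepted format strings vary between server implementations.

```python
from urllib.parse import urlencode

# Hypothetical endpoint; coverage name and axis labels are also assumptions.
ENDPOINT = "https://example.org/rasdaman/ows"


def wcps_point_series_celsius(coverage_id, lat, lon, start, end):
    """WCPS query: extract a point time series and convert K to degrees C on the server."""
    return (
        f"for $c in ({coverage_id}) "
        f'return encode($c[Lat({lat}), Long({lon}), ansi("{start}":"{end}")] - 273.15, '
        f'"application/json")'
    )


def process_coverages_url(endpoint, query):
    """Wrap a WCPS query in a WCS ProcessCoverages KVP request."""
    params = {"service": "WCS", "version": "2.0.1",
              "request": "ProcessCoverages", "query": query}
    return f"{endpoint}?{urlencode(params)}"


q = wcps_point_series_celsius("ERA_Interim_2t", 51.5, -1.0, "2000-01-01", "2000-12-31")
print(q)
print(process_coverages_url(ENDPOINT, q))
```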

For ECMWF it was important to investigate how such a service could be provided for the MARS archive. One of the main obstacles is linking an asynchronous data service (ECMWF's MARS archive) with a synchronous on-demand service (OGC web services). To address this, the project tested combining MARS with the rasdaman (raster data manager) array engine, which is designed to handle massive multi-dimensional arrays such as those used in the Earth, space and life sciences (for details, see the box).
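The mismatch between a queued archive and an HTTP request that expects an immediate answer can be illustrated with a generic pattern: the synchronous front end submits the retrieval to a queue and waits for a bounded time. This is a sketch of one common approach, not the project's design; `slow_mars_retrieve` is a hypothetical stand-in for an archive retrieval, and the status codes are illustrative.

```python
import concurrent.futures
import time

# A worker pool stands in for the asynchronous archive: requests are queued
# and served when a worker becomes free.
archive_pool = concurrent.futures.ThreadPoolExecutor(max_workers=2)


def slow_mars_retrieve(request: str, delay: float) -> str:
    """Hypothetical stand-in for an archive retrieval that may take a while."""
    time.sleep(delay)
    return f"data for {request}"


def handle_wcs_request(request: str, delay: float, timeout: float):
    """Synchronous front end: submit to the queue, then block up to `timeout` seconds."""
    future = archive_pool.submit(slow_mars_retrieve, request, delay)
    try:
        return 200, future.result(timeout=timeout)
    except concurrent.futures.TimeoutError:
        # The retrieval keeps running in the background; a service could ask the
        # client to retry later and then serve the result from a cache.
        return 503, "retrieval still in progress"
```

Combined with the caching described above, a retry after a timeout would find the data already ingested and return it immediately.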

As part of the project, EarthServer also supported two hackathons hosted at ECMWF to engage with the user community. It was very useful to see how users were interacting with the OGC web services provided by the project.

While OGC web standards define the interface and interaction, many details of the implementation are left to the service provider. To ensure that all services follow the same conventions on how data is accessed and offered, the community needs to agree on a profile. Such a profile for meteorological data offered via WCS 2.0 is under development in the OGC Met Ocean Domain Working Group.

Main project outcomes

The EarthServer-2 project has explored new ways to make ECMWF’s varied and large datasets available to a wider user community. This is important for ECMWF’s core operations as well as for its activities relating to the EU-funded Copernicus Earth observation programme. ECMWF participates in the Met Ocean Domain Working Group and will aim to follow its guidelines, such as the MetOcean profile for WCS 2.0.

ECMWF would like to thank all those who tested the project’s OGC Web Coverage Service (WCS) endpoint and provided valuable feedback on the test service during the project. This service will continue to run until June 2018. The lessons of the project will feed into new developments for web services at ECMWF.