ECMWF held its biennial workshop on the use of high-performance computing in meteorology from 20 to 24 September, bringing together experts from national weather centres, academia and industry.

The theme of this year’s online edition was ‘Towards Exascale Computing in Numerical Weather Prediction’. A total of 313 participants from 43 countries took part and were able to follow more than 50 talks, including three keynotes from leading experts in high-performance computing (HPC).

From Earth system model simulations to Digital Twins

In the opening talk, Nils Wedi (ECMWF) presented global weather and climate simulations at 1 km resolution performed on the Summit supercomputer in the US.

Michael Lange (ECMWF) presented work to prepare ECMWF’s Integrated Forecasting System (IFS) for HPC accelerator architectures. He also outlined steps that need to be taken to ensure the IFS can run efficiently on heterogeneous hardware.

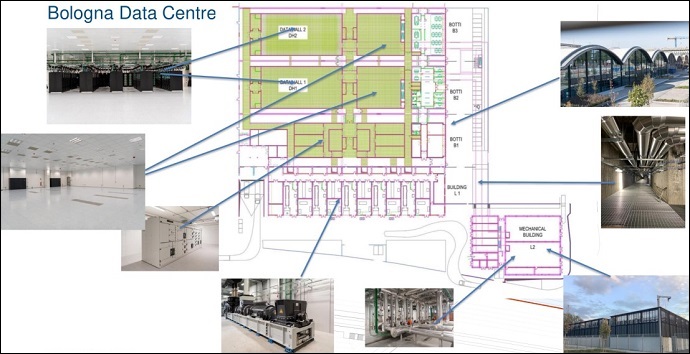

Next, Mike Hawkins (ECMWF) presented ECMWF’s Bologna data centre, and Oliver Treiber (ECMWF) led a live virtual tour of the new Atos HPC machine being set up there.

Mike Hawkins presented the ECMWF Bologna data centre layout.

The afternoon session included three talks presenting EuroHPC pre-exascale supercomputers that are due to become operational in the near future.

In addition, Peter Bauer (ECMWF) presented the EU-funded Destination Earth (DestinE) programme, and Bryan Lawrence (University of Reading) set out his vision of the transition from an Earth system model to a Digital Twin.

Convergence and heterogeneity

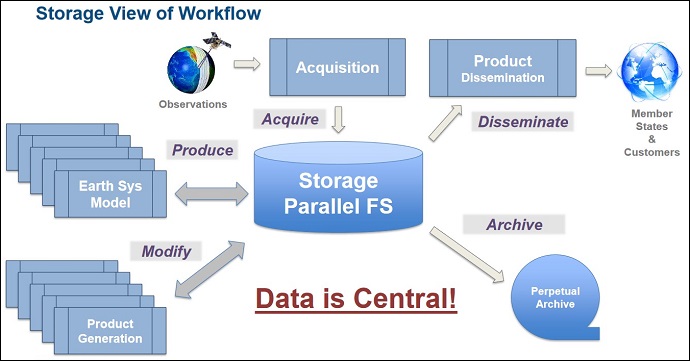

The following day, Tiago Quintino (ECMWF) talked about the convergence of HPC, cloud technology and data analytics for exascale weather forecasting. He pointed out that ensemble datasets are growing quadratically to cubically in size, which calls for data-centric workflows that enable in-situ analysis of the data as it is generated.

Data-centric workflows are essential for exascale weather forecasting, according to Tiago Quintino.

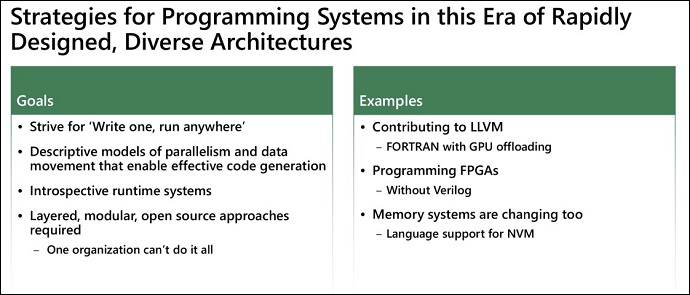

On the same day, Jeffrey Vetter from Oak Ridge National Laboratory gave the first keynote address on ‘Preparing for Extreme Heterogeneity in High Performance Computing’.

He emphasized that the heterogeneous era is here to stay. With the ever-increasing complexity of the hardware and software landscape, developers should strive to create abstractions that hide this complexity as much as possible. This can be achieved through modular, layered approaches and through descriptive rather than prescriptive models of parallelism.

Jeffrey Vetter gave a presentation on how to prepare for extreme heterogeneity.

Oliver Fuhrer (Allen Institute for Artificial Intelligence) presented the GT4Py domain-specific language (DSL) toolchain, developed together with ETH Zurich, the Swiss National Supercomputing Centre (CSCS) and MeteoSwiss. He argued that DSLs such as GT4Py are an attractive proposition for the weather and climate community because they offer a higher level of abstraction, which opens the door to more optimizations.

Single precision and resolving storms

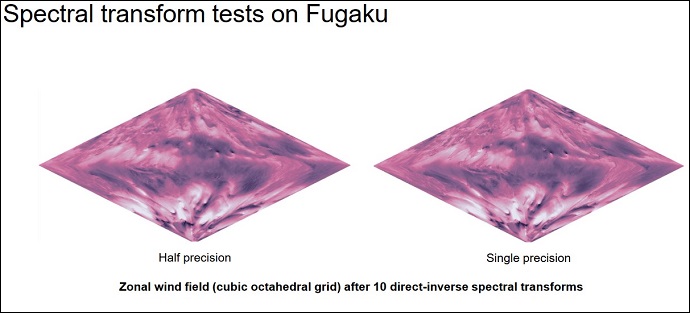

On Wednesday, Sam Hatfield (ECMWF) gave a talk on ‘Single Precision in Earth System Models’. He showed progress in porting the NEMO ocean model to single precision, as well as the benefit of going even further, to half precision, in certain parts of the IFS such as the Legendre transforms, where speed-ups of up to a factor of 2.5 were obtained.

Single-precision vs half-precision IFS spectral transforms on the Fugaku supercomputer, by Sam Hatfield.

Richard Loft from the National Center for Atmospheric Research delivered the second keynote on Wednesday, entitled ‘Towards an Earth System Model at Storm Resolving Resolutions’.

His main message was that we are at least two generations of HPC hardware away from being able to perform 1 km predictions at 1 Simulated Year Per Day (SYPD), and that, as machines get larger, a critical factor in procurements will be SYPD per megawatt (MW) of power consumed.

Richard Loft said that, for weather, we are at least two generations away from 1 km predictions at 1 SYPD.
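The two metrics in Loft’s message can be made concrete with a little arithmetic. The sketch below is illustrative only: it assumes a 365-day model calendar and a single average power figure for the machine, and all the numbers in it are hypothetical rather than results presented at the workshop.

```python
# Minimal sketch of throughput (SYPD) and energy-normalised throughput (SYPD per MW).
# Assumptions: 365-day model calendar; power_mw is an average draw for the whole run.
# The example figures are hypothetical, not workshop results.

DAYS_PER_YEAR = 365.0


def sypd(simulated_days: float, wallclock_hours: float) -> float:
    """Simulated years per wall-clock day."""
    simulated_years = simulated_days / DAYS_PER_YEAR
    wallclock_days = wallclock_hours / 24.0
    return simulated_years / wallclock_days


def sypd_per_mw(simulated_days: float, wallclock_hours: float, power_mw: float) -> float:
    """SYPD divided by the machine's average power draw in megawatts."""
    return sypd(simulated_days, wallclock_hours) / power_mw


if __name__ == "__main__":
    # Hypothetical run: one simulated year in 48 wall-clock hours on a 10 MW system.
    print(f"SYPD: {sypd(365.0, 48.0):.2f}")                      # 0.50
    print(f"SYPD per MW: {sypd_per_mw(365.0, 48.0, 10.0):.3f}")  # 0.050
```

Normalising by power means that two machines reaching the same SYPD can still differ substantially in running cost, which is why the metric matters for procurement.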

Fugaku

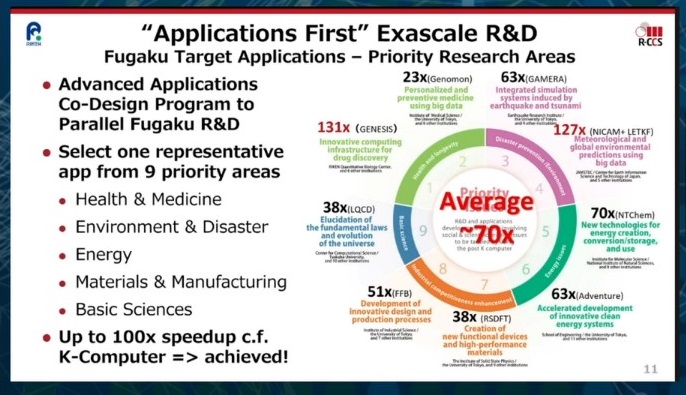

Satoshi Matsuoka from the RIKEN Center for Computational Science gave the third keynote on Thursday. The talk presented Fugaku, the No. 1 supercomputer in the TOP500 list.

This supercomputer was designed from the outset with application performance in mind. Applications from areas such as health and medicine, environment and disaster, energy, materials and manufacturing, and basic sciences were prioritised, and the design ensured that they perform well on the Fugaku architecture. Thanks to this co-design approach, speed-ups of up to a hundredfold over the previous K computer have been achieved for certain applications.

Satoshi Matsuoka presented the Fugaku supercomputer.

Machine learning

On Friday, Peter Dueben (ECMWF) presented the use of machine learning at ECMWF, and the challenges for machine learning in weather and climate modelling.

He concluded that there are many application areas throughout the ECMWF prediction workflow for which machine learning can make a difference. However, the weather and climate community is still only at the beginning of exploring the potential of machine learning at scale.

In his view, most of these challenges can be overcome in the medium term through collaboration, scientific studies, shared datasets, and software and hardware developments.

See you in Bologna in 2023

The Deputy Director of Computing at ECMWF, Isabella Weger, closed the workshop and announced her retirement from ECMWF after sixteen and a half years. She also announced that the 20th edition of the ECMWF HPC workshop in meteorology will be held in Bologna in 2023.

Further information

More information and recordings of all the talks are available on the workshop web page.