Dr Mark Rodwell, ECMWF Diagnostics Co-ordinator

Methods for scoring ensemble forecasts are generally well established, but it is less clear how to systematically go about identifying the most serious remaining deficiencies in the prediction system. By “most serious deficiencies” I mean those which, if fixed, would lead to the largest improvement in the forecast scores. Our recent article in the Bulletin of the American Meteorological Society (BAMS) tries to address this diagnostic aspect.

The problem for diagnostics

Ensemble weather forecasts are sets of individual forecasts which, together, allow us to estimate probabilities for given “events” (e.g. rain next Tuesday) that are consistent with uncertainties in our knowledge of the current state (of the atmosphere, land surface, ocean, sea ice, etc.). The most appropriate scores for assessing forecast performance are those which are “proper”. Proper scores reward forecasts for their statistical properties of reliability and refinement - thereby discouraging “hedging” and encouraging the development of “sharper” forecasts.
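To illustrate why a proper score discourages hedging, here is a minimal sketch using the Brier score, which is one well-known proper score for a binary event. The choice of the Brier score, the function name `expected_brier` and the value `q_true = 0.7` are assumptions made for illustration and are not taken from the BAMS article. The sketch searches for the forecast probability that gives the lowest expected score when the event really occurs with frequency `q_true`.

```python
def expected_brier(p_forecast, q_true):
    """Expected Brier score when probability p_forecast is issued
    for an event that actually occurs with frequency q_true."""
    # Event occurs (weight q_true): squared error is (1 - p)^2.
    # Event does not occur (weight 1 - q_true): squared error is p^2.
    return q_true * (1.0 - p_forecast) ** 2 + (1.0 - q_true) * p_forecast ** 2


q_true = 0.7  # hypothetical true frequency of the event
candidates = [i / 100 for i in range(101)]

# The forecast probability with the lowest expected score.
best_p = min(candidates, key=lambda p: expected_brier(p, q_true))

hedged_score = expected_brier(0.5, q_true)     # hedging towards 50%
honest_score = expected_brier(q_true, q_true)  # stating the true probability

print(f"best forecast probability: {best_p:.2f}")
print(f"expected score if hedged to 0.5: {hedged_score:.3f}")
print(f"expected score if honest: {honest_score:.3f}")
```

Because lower Brier scores are better, the honest forecast beats the hedged one in expectation, which is the sense in which a proper score rewards stating the probability you actually believe.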

In our article, we focus largely on the reliability aspect, which is sensitive to modelling developments. A forecast system is “reliable” if, when it predicts any given event with probability p, the event occurs, over a sufficiently large set of such forecasts, with outcome frequency q = p. The problem for diagnosis is that it is difficult to relate situations where q ≠ p to a particular modelling deficiency. This is partly because the same forecast probability can arise for different reasons. For example, a 70% probability of rain in five days’ time could, among a myriad of other possibilities, reflect uncertainties in cyclogenesis over the 5-day period, or uncertainties in the triggering of sub-grid-scale convection at day 5, or both. If, when the ensemble predicts a 70% chance of rain, it actually rains 50% of the time, it would be difficult to determine which modelling aspect was to blame. Furthermore, given limited sample sizes and the fact that uncertainties grow exponentially in the medium range, it can be difficult to estimate q accurately enough for us even to be able to say with confidence that q ≠ p.
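The comparison of p with q can be sketched as a simple reliability table. The example below is only an illustration with synthetic data: the function name `reliability_table`, the five forecast probabilities and the degree of over-confidence are all hypothetical. It groups forecasts by their issued probability and reports the outcome frequency q, together with a rough binomial standard error that shows how the sample size limits our confidence that q ≠ p.

```python
import random
from collections import defaultdict


def reliability_table(probs, outcomes, n_bins=10):
    """Group forecasts by issued probability and return, for each
    non-empty bin, (mean forecast probability p, outcome frequency q, count)."""
    bins = defaultdict(list)
    for p, o in zip(probs, outcomes):
        k = min(int(p * n_bins), n_bins - 1)  # bin index for this forecast
        bins[k].append((p, o))
    table = []
    for k in sorted(bins):
        pairs = bins[k]
        n = len(pairs)
        p_mean = sum(p for p, _ in pairs) / n
        q = sum(o for _, o in pairs) / n
        table.append((p_mean, q, n))
    return table


# Synthetic, hypothetical data: an over-confident system whose true
# outcome frequencies are pulled towards 0.5 relative to what it predicts.
rng = random.Random(0)
probs = [rng.choice([0.1, 0.3, 0.5, 0.7, 0.9]) for _ in range(20000)]
outcomes = [1 if rng.random() < 0.5 + 0.6 * (p - 0.5) else 0 for p in probs]

table = reliability_table(probs, outcomes)
for p_mean, q, n in table:
    std_err = (q * (1.0 - q) / n) ** 0.5  # binomial standard error of q
    print(f"p = {p_mean:.2f}  q = {q:.3f} +/- {std_err:.3f}  (n = {n})")
```

With thousands of forecasts per bin the standard error is small. With the few dozen cases typically available for a given flow type it would be much larger, and the table says nothing about which part of the model caused any mismatch.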

How diagnostics might avoid the problem

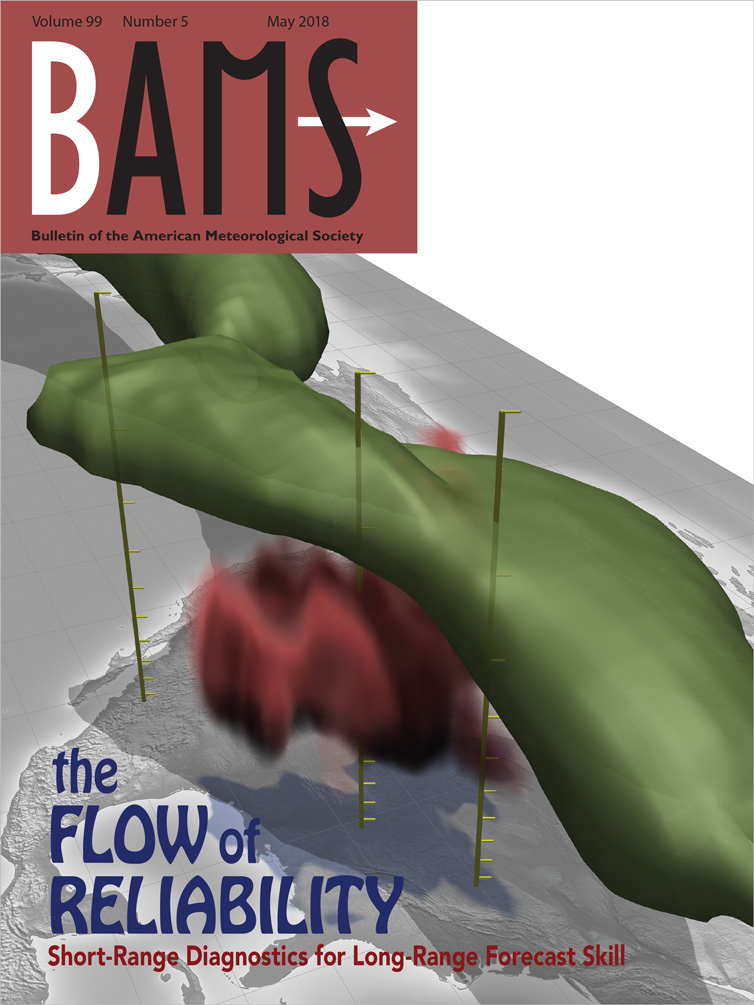

In our search to overcome these diagnostic difficulties, we asked whether it is possible to confidently identify a lack of reliability, and to indicate its causes, by diagnosis of a set of very short forecasts, all with a similar initial synoptic flow type in a given region. The flow type we discussed most is shown in the BAMS cover image (Figure 1). This 3-D visualisation is a composite of 54 cases with similar initial conditions over North America. The green tube represents the jetstream and the red “cloud” shows diabatic heating (from convection, radiative absorption, etc.) interacting with the jetstream. Uncertainty in whether mesoscale convective systems (MCSs) develop in these situations is thought to lead to uncertainties in the jetstream which, in turn, lead to increased ensemble spread and occasional poor deterministic forecasts over Europe several days later.

Figure 1. Cover image of the Bulletin of the American Meteorological Society, May 2018, depicting the jetstream (green tube shows where wind speeds are greater than 23 m s⁻¹) and diabatic heating associated with convective systems (red cloud where heating is greater than 3 K day⁻¹). Based on a composite of similar situations. Figure created with Met3D visualisation package (developed by Marc Rautenhaus, University of Munich) ©American Meteorological Society. Used with permission.

What we evaluated was the ensemble spread (variance) in the jetstream. Getting the spread “right” is necessary, if not sufficient, for reliability. The well-known “spread-error” relationship states that, averaged over a sufficiently large set of forecasts, the spread should match the error of the ensemble-mean. At short lead times, uncertainty in our knowledge of the truth is generally not negligible in the calculation of the error, and so we include this uncertainty in an extended variance relationship – very much as done in data assimilation. Our analysis is, in fact, applied to the prior forecasts, observations (here from aircraft) and estimated observation errors used within the Ensemble of Data Assimilations (EDA).
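One way to picture such an extended variance relationship is as a budget: the mean squared departure of the observations from the ensemble mean should be roughly accounted for by the squared bias, the mean ensemble variance and the observation error variance, with any residual pointing to a spread deficiency or excess. The sketch below is an illustration under that assumption and is not necessarily the exact formulation used in the article. All names (`variance_budget`, `simulate`) and all numbers are hypothetical, and the data are synthetic.

```python
import random
import statistics


def variance_budget(ensembles, observations, obs_error_var):
    """Compare mean squared (observation minus ensemble-mean) departures
    with squared bias + mean ensemble variance + observation error variance."""
    departures = [o - statistics.fmean(e) for e, o in zip(ensembles, observations)]
    mean_sq_departure = statistics.fmean(d * d for d in departures)
    bias_sq = statistics.fmean(departures) ** 2
    ens_var = statistics.fmean(statistics.variance(e) for e in ensembles)
    residual = mean_sq_departure - bias_sq - ens_var - obs_error_var
    return {
        "departure2": mean_sq_departure,
        "bias2": bias_sq,
        "ens_var": ens_var,
        "obs_var": obs_error_var,
        "residual": residual,  # > 0 suggests too little spread
    }


def simulate(n_cases, n_members, true_sd, ens_sd, obs_sd, seed=0):
    """Synthetic cases: the truth scatters about a centre with true_sd,
    the members scatter about the same centre with ens_sd."""
    rng = random.Random(seed)
    ensembles, observations = [], []
    for _ in range(n_cases):
        centre = rng.gauss(0.0, 5.0)
        truth = centre + rng.gauss(0.0, true_sd)
        ensembles.append([centre + rng.gauss(0.0, ens_sd) for _ in range(n_members)])
        observations.append(truth + rng.gauss(0.0, obs_sd))
    return ensembles, observations


obs_sd = 1.0
# Ensemble spread matches the true uncertainty.
reliable = variance_budget(*simulate(4000, 20, 2.0, 2.0, obs_sd), obs_sd ** 2)
# Ensemble spread is half the true uncertainty.
underdispersive = variance_budget(*simulate(4000, 20, 2.0, 1.0, obs_sd), obs_sd ** 2)

for name, budget in [("reliable", reliable), ("underdispersive", underdispersive)]:
    print(name, {key: round(value, 2) for key, value in budget.items()})
```

In the well-calibrated case the residual stays small (a finite ensemble leaves a small positive remainder of about the ensemble variance divided by the number of members), whereas halving the ensemble spread leaves a large positive residual. The budget is only as trustworthy as the assumed observation error variance, since an error in that term shifts the residual directly.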

Results of the diagnostic approach

The results, for the situation depicted in Figure 1, suggest that the model greatly underestimates uncertainties in the jetstream, and thus an ensemble forecast will become unreliable if it “passes through” this flow type. We suggest that the underestimation of uncertainty is partly due to an underestimation of (stochastic) uncertainties in convection, and partly due to systematic convection errors. So, while forecast uncertainty is large for Europe in these situations, it may not be large enough. Furthermore, efforts to improve our model and our representation of model uncertainty in this convective flow situation should lead to improved forecast performance.

While such diagnosis is important for guiding system development, turning it into actual improvements requires much collaboration with, and work by, modellers. The method has already been useful in giving such guidance. For example, it suggested that our representation of model uncertainty was over-active in clear-sky situations, and changes which address this issue have recently been implemented in the ECMWF forecast system.

The study published in BAMS benefitted greatly from international collaboration with Dave Parsons (Oklahoma University), who is an expert on mesoscale convection over North America, and ECMWF Fellow Heini Wernli (from ETH Zurich), who is an expert on warm conveyor belts (WCBs) – another flow type we considered – where warm moist air ascends ahead of a cold front and leads to large-scale precipitation (along with embedded convection).

A diagnostic framework for future forecast system development

The red shading in the animation (Figure 2) highlights flow types upstream of Europe where there is rapid growth of prior forecast spread in the upper troposphere (potential vorticity on the 315 K isentropic surface; PV315). Black dots show the ensemble-mean precipitation rate, and vectors show the 850 hPa winds from the unperturbed prior forecast (the control member of the EDA). The red contour shows where PV315 = 2 PVU, which marks the northern extent of the jetstream. The two flow types mentioned above (MCSs and WCBs) are clearly evident. The “pooling” of uncertainty generated by both these features was no doubt responsible for the large ensemble spread at day 6 over Europe following this period. Over the North Atlantic region shown in Figure 2, as well as globally, there are many other flow types which might lead to unreliability in our ensemble forecasts.

Figure 2. Animation of the quasi-instantaneous growth rate in the Ensemble of Data Assimilations (EDA) prior forecast spread, with red shading indicating the synoptic flow types that act to enhance uncertainty the most. These are often associated with convection (dots indicate precipitation rate). Vectors show lower tropospheric winds (v850). The red contour shows where PV315 = 2 PVU (potential vorticity on the 315 K isentropic surface) and marks the northern extent of the jetstream. Shading shows the growth rate of the standard deviation in PV315 following the horizontal flow at 315 K, and has been smoothed to emphasise synoptic spatio-temporal scales.

My current work is focused on producing an objective clustering of initial flow types, and ascertaining which of these leads to the strongest degradation in reliability. The key aspect is not the growth rate per se, but rather how well the model preserves reliability, and the frequency of occurrence of the flow type.

The refinement aspect, mentioned above, is also important for forecast performance, although possibly less sensitive to the modelling developments discussed above. Refinement is improved when, on average, the (flow-dependent) outcome frequencies, q, become closer to 0 or 1. This can be achieved, within the limits of predictability, through the assimilation of more observational information.

Ensemble weather forecasts are the foundation of what we do here at ECMWF and they have become more skilful over the years. However, there has been a limited range of diagnostics to help guide further improvements in such forecast systems. The diagnostic approach discussed above is far from precise and involves disentangling variances in the prior forecasts from observational error variances. Nevertheless, it should give scientists an indication of the most serious problems and help them to prioritise modelling efforts on aspects which might be expected to lead to the largest improvements in overall forecast performance.